What makes one agency able to move artificial intelligence (AI) into mission production in days, while another still navigates the same barriers months or even years later? The answer isn’t technical talent or budget alone. It’s whether infrastructure is intentionally built to support velocity, trust and scale.

As Federal leaders sharpen their focus on operational AI, speed is becoming the key differentiator. Not speed for its own sake, but speed that is purposeful, compliant and aligned with outcomes the public and the mission demand. Moving AI from pilot to production quickly now defines AI leadership in Government.

Rethinking AI Readiness for Federal Missions

Simply demonstrating isolated AI successes is no longer sufficient. Federal agencies are now expected to embed AI into core workflows, drive outcomes and uphold public trust. Chief AI Officers (CAIOs) are shifting focus from pilots to impact. That shift requires more than technical oversight; it demands leadership that can drive operational change and enable the workforce to prioritize higher-value work.

Scaling mission-aligned AI requires rethinking old norms. Agencies embracing this shift are achieving faster deployments, greater agility and increased transparency, while others risk getting stuck in pilot mode without the proper foundation.

Building the Foundation for Mission-Aligned AI

Reliable acceleration comes from an intentional foundation, not shortcuts. Agencies moving AI from concept to capability consistently align strategy, data, infrastructure, teams and governance from the outset.

Mission Strategy First

Successful AI efforts prioritize mission impact over technical novelty. Clear goals ensure leadership, infrastructure and resources move in sync toward measurable outcomes.

Data That Moves at Mission Speed

AI needs fast, secure access to trusted structured and unstructured data. Retrieval-based architectures anchored in vetted sources support both performance and privacy.
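As a rough illustration of what "anchored in vetted sources" can mean in practice, the sketch below filters a document set against an allowlist before any ranking happens. The source names, the `VETTED_SOURCES` allowlist and the keyword-overlap scoring are all hypothetical stand-ins chosen for brevity; a production system would more likely use an embedding index and agency-specific access controls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # where the text came from
    text: str


# Hypothetical allowlist; real source names would be agency-specific.
VETTED_SOURCES = {"agency-policy-library", "records-archive"}

# Illustrative corpus, including one source that is NOT on the allowlist.
EXAMPLE_CORPUS = [
    Document("agency-policy-library", "Travel reimbursement policy for field staff"),
    Document("unvetted-web-scrape", "Travel reimbursement policy rumors and travel tips"),
    Document("records-archive", "Archived procurement records"),
]


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    words = (w.strip(".,;:!?").lower() for w in text.split())
    return {w for w in words if w}


def retrieve(query, corpus, top_k=2):
    """Return up to top_k vetted documents ranked by keyword overlap."""
    query_terms = tokenize(query)
    scored = []
    for doc in corpus:
        if doc.source not in VETTED_SOURCES:
            continue  # unvetted content never reaches the model
        overlap = len(query_terms & tokenize(doc.text))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


if __name__ == "__main__":
    for doc in retrieve("travel reimbursement policy", EXAMPLE_CORPUS):
        print(doc.source, "|", doc.text)
```

The design point is the order of operations: the allowlist check runs before scoring, so an unvetted document cannot be returned no matter how well it matches the query.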

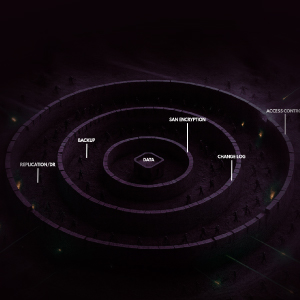

Scalable, AI-Optimized Infrastructure

Traditional IT was not designed for AI’s compute and data demands. Agencies moving at mission speed rely on infrastructure optimized for accelerated computing and seamless operations across domains.

Integrated, Agile Teams

Scaling AI takes more than data science. Cross-disciplinary teams aligned on outcomes and able to deliver in agile cycles are key.

Compliance as an Enabler

Built-in transparency and risk management turn compliance into an asset. Agencies that embed governance early shorten Authority to Operate (ATO) timelines and boost public trust.

A Roadmap for Responsible Acceleration

Moving fast without structure is risky. Moving fast with structure enables repeatable, responsible AI delivery. A maturity roadmap helps agencies balance acceleration with alignment to Federal guidance.

1. Baseline Assessment

Clear visibility into current data maturity, infrastructure readiness, governance posture and workforce capabilities helps agencies prioritize investments. Systematically addressing common gaps, such as fragmented data pipelines and siloed teams, gives AI initiatives a foundation that scales with less risk.

2. Mission-Driven Objectives

Successful AI leaders define what “mission success” looks like in concrete terms. This discipline prevents overbuilding, keeps efforts tied to operational outcomes and builds clear value stories to sustain leadership support.

3. Phased Testing Environments

Test beds and controlled environments provide space to validate AI approaches before full production. These environments foster safe iteration, surface governance needs early and create reusable patterns that accelerate future deployments.

4. Continuous Model Feedback

AI systems must adapt over time, not just at launch. Embedding continuous monitoring, performance tuning and user-driven feedback ensures models remain mission-relevant and trustworthy as operational contexts evolve.
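One simple way to picture continuous feedback is a rolling check of model predictions against outcomes that users later confirm. The sketch below is an assumption-laden illustration, not a prescribed method: the `FeedbackMonitor` class, the window size and the accuracy threshold are hypothetical, and real deployments would typically track additional signals such as input drift and latency.

```python
from collections import deque


class FeedbackMonitor:
    """Tracks recent prediction outcomes and flags when accuracy degrades.

    All parameter defaults are illustrative, not recommended values.
    """

    def __init__(self, window=50, min_accuracy=0.9, min_samples=10):
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples

    def record(self, prediction, confirmed_label):
        """Store whether a prediction matched the user-confirmed outcome."""
        self.outcomes.append(prediction == confirmed_label)

    def accuracy(self):
        """Rolling accuracy over the window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True once enough feedback exists and accuracy is below threshold."""
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.min_accuracy


if __name__ == "__main__":
    monitor = FeedbackMonitor(window=10, min_accuracy=0.8, min_samples=5)
    for _ in range(5):
        monitor.record("approve", "approve")  # model agreed with reviewers
    for _ in range(5):
        monitor.record("approve", "deny")  # operational context has shifted
    print(monitor.accuracy(), monitor.needs_review())
```

Requiring a minimum number of samples before raising a flag avoids triggering a review on the first one or two disagreements after launch.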

From Use Case to Outcome: What Speed Requires

Agencies moving AI into production quickly focus on the right use cases. Logistics optimization, document analysis and fraud detection are examples of areas where AI at mission speed delivers immediate benefit.

Another key enabler is avoiding unnecessary reinvention. Pre-trained, enterprise-grade models tailored to agency needs dramatically reduce development time.

Modern platforms that support containerized deployment and orchestration of AI microservices across cloud and on-prem environments accelerate this process. Agencies gain flexibility to optimize cost, performance and control based on mission needs. Modular, adaptable architectures also help avoid lock-in and support evolving policy and security requirements.

Security and compliance must be integrated from day one. Systems aligned with FedRAMP, FISMA and Executive Order 14110 requirements avoid the rework that can stall even well-intentioned efforts late in the process.

The Capabilities That Make Rapid AI Possible

To deploy AI at mission speed, infrastructure must deliver scalability, explainability, risk management and collaboration-readiness.

Systems must handle expanding data sources, dynamic mission demands and increased user load without degradation. Models must produce outputs that analysts, operators and oversight bodies can trust and interpret.

Ethical risk management must be proactive, not reactive. Bias checks, audit trails and transparency must be built in from training through ongoing monitoring. Collaboration across agencies and partners must be seamless to maximize impact and minimize duplication of effort.

These capabilities must be grounded in alignment with Federal frameworks such as the NIST AI Risk Management Framework and GSA’s AI guidance. Infrastructure that is “policy-ready” supports faster delivery and greater trust in outcomes.

Leading with Principles That Scale

For Federal AI leaders, the challenge is scaling AI to deliver real mission outcomes while maintaining public trust. Success requires investing in scalable, policy-aligned infrastructure and fostering a culture where speed and governance go hand in hand.

Sustainable, enterprise-wide impact demands leadership that connects vision with execution. The CAIO must drive cross-agency collaboration, operational change and continuous feedback to keep AI responsive to evolving mission needs.

Fast, Mission-Driven AI is Achievable—If You Build for It

Deploying AI in days—not months—is possible when infrastructure, strategy and culture align to support it. Agencies embracing this imperative are setting the pace for responsible, impactful AI in Government.

When AI systems are grounded in mission need, accelerated by the proper infrastructure and governed with intention, they enable something bigger: a Government workforce empowered to focus less on routine tasks and more on the high-impact decisions and public outcomes that matter most.

For Federal AI leaders, the opportunity is now: to move from pilot to production with velocity, governance and trust—and to deliver mission outcomes at a speed that matches the urgency of the moment.