As if gaining visibility into all human and non-human identities wasn’t a big enough task for security teams, adding AI agents into the mix takes identity complexity to a new level. Organizations of all sizes are confronting this new reality, in which it is premature for most to say with confidence that they know about every AI agent running in their environment.

That uncertainty is not a knowledge gap. It is an attack surface.

Gartner’s new report on IAM for AI agents names the real nugget of truth: “Purpose/intent cannot be discovered after the fact by monitoring and observability capabilities.”

That is not just analyst language. It is a fundamental shift in how we need to think about governing agents. You cannot govern agents by watching them after the fact. You must know who they are, what they are for, and who is accountable before they run.

The numbers that should change your priorities

Gartner’s data reinforces the urgency. By 2029, over 50% of successful attacks against AI agents will exploit access control weaknesses. By 2028, 90% of organizations that share credentials between humans and agents will need to make significant investments to undo that design.

Those numbers are consequences, not causes. The root cause is structural: IAM maturity for agents is uneven. The Gartner lifecycle maturity assessment makes this visible. Authentication and monitoring capabilities are relatively mature. Identity registration and authorization are not. That gap is the story.

Weak identity registration means the agent was never properly onboarded as an identity. No defined owner. No declared purpose. No documented scope. It has credentials and it is running, but nobody can tell you who built it, what it is supposed to do, or what happens when it breaks. When registration is weak, ownership is unclear. And when ownership is unclear, accountability does not exist.
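To make the registration gap concrete, here is a minimal sketch of what a complete registration record could require. The `AgentRegistration` class, its field names, and the `registration_gaps` helper are all hypothetical, chosen only to mirror the three missing pieces named above: owner, purpose, and scope.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRegistration:
    """Hypothetical registration record; field names are illustrative only."""
    agent_id: str
    owner: str = ""      # the accountable human
    purpose: str = ""    # declared intent, stated before the agent runs
    scope: set[str] = field(default_factory=set)  # resources it may touch


def registration_gaps(record: AgentRegistration) -> list[str]:
    """Return the registration fields that are still undefined."""
    gaps = []
    if not record.owner.strip():
        gaps.append("owner")
    if not record.purpose.strip():
        gaps.append("purpose")
    if not record.scope:
        gaps.append("scope")
    return gaps


# An agent that has credentials and is running, but was never onboarded.
orphan = AgentRegistration(agent_id="agent-7")

# An agent with a defined owner, declared purpose, and documented scope.
registered = AgentRegistration(
    agent_id="invoice-bot",
    owner="j.doe",
    purpose="reconcile invoices",
    scope={"erp:invoices:read"},
)

print(registration_gaps(orphan))      # ['owner', 'purpose', 'scope']
print(registration_gaps(registered))  # []
```

A check like this could gate credential issuance, so that an agent with any open gap never reaches production.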

Weak authorization means the agent has more access than it needs. It can reach databases, APIs, and workflows that have nothing to do with its intended function. Nobody scoped it down because nobody defined what “down” looks like. When authorization is weak, privilege is excessive.

Now combine excessive privilege with autonomy. An agent that can reason, chain tools, and act on its own, with more access than it should have, and no one clearly accountable for what it does. That is the exploitable attack surface. That is the chain revealed in Gartner’s data.

You cannot protect what you cannot see

Before you can govern agents, you need to find them. All of them. Not just the ones your platform team sanctioned. The ones that developers spun up to solve an issue. The ones contractors built. The ones that exist because someone needed to “just get this working.”

We hear this consistently from security teams. As one InfoSec manager at a professional services firm put it: “We do not find out about it until someone goes and does an actual audit of the system.”

Gartner’s assessment confirms it: identity registration is one of the least mature IAM capabilities for AI agents. Most organizations cannot answer the basics: What is this agent supposed to do? Who owns it? What happens when it breaks?

Discovery is not a checkbox. It is the foundation. Without it, every policy you write is based on assumptions, and assumptions do not survive first contact with autonomous agents operating at machine speed.
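At its simplest, discovery is a comparison between what is actually acting in the environment and what was formally onboarded. The sketch below assumes you already have both lists; the function name and the sample identities are invented for illustration, and real discovery would draw on authentication logs, platform inventories, and similar sources.

```python
def find_unregistered(observed: set[str], registered: set[str]) -> set[str]:
    """Identities seen acting in the environment but absent from the registry."""
    return observed - registered


# Illustrative data: identities seen in activity logs vs. identities onboarded.
observed_identities = {"invoice-bot", "dev-helper", "contractor-agent"}
registered_identities = {"invoice-bot"}

shadow_agents = find_unregistered(observed_identities, registered_identities)
print(sorted(shadow_agents))  # ['contractor-agent', 'dev-helper']
```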

The identity registration gap

Most organizations are trying to govern agents with the wrong tools. They are monitoring. They are logging. But monitoring tells you what happened. Identity registration tells you what should happen. Authorization enforces the boundary between them.

If your governance model depends on catching problems after they occur, you are always going to be behind.

This is where many organizations reach for familiar tools. IGA platforms can help with registration and lifecycle management. IAM solutions like Okta or Entra ID can register agent identities. These are necessary steps. But they stop there. They can tell you an agent exists and who requested it. They cannot enforce anything at the moment that agent acts.

That is the gap: governance on paper versus enforcement in production.

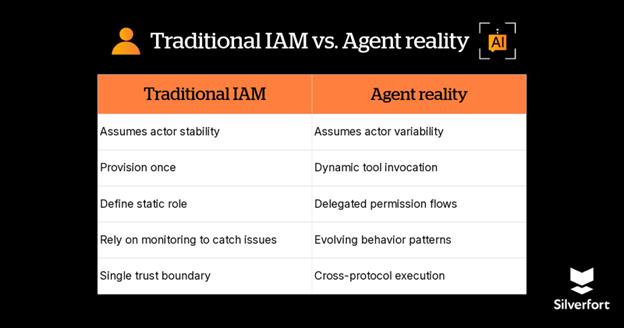

Agents are identities, but not like any you have managed before

The way I read Gartner’s recommendations, there is a unifying thread: treat AI agents like you would treat any identity in your organization. They authenticate. They access resources. They act on behalf of someone. That is not a tool. That is an identity.

But agents are more complex than traditional identities. They are what we call composite identities. They combine the blast radius of service accounts with the unpredictability of human decision-making at machine speed.

Four things make them different:

- They act autonomously, unlike service accounts that execute predefined operations.

- They may inherit human delegation, creating privilege escalation risk.

- They may chain multiple machine identities in a single task.

- They may operate across trust boundaries your IAM system was not designed to handle.

Think about how you onboard an employee. You do not give them admin access on day one. You define their role, their manager, their scope. You review their access as responsibilities change. Agents need that same lifecycle. But right now, most organizations are skipping straight to “give them credentials and hope for the best.”

What runtime enforcement actually looks like

Gartner calls out the authorization gap. But what does closing that gap look like in practice?

Even modern IAM systems, including conditional access and continuous evaluation, were designed primarily to evaluate who is signing in and what that identity is generally allowed to do. Agents introduce a different problem. They do not just sign in. They execute. They invoke tools dynamically. They operate across multiple identity contexts within a single task.

Traditional conditional access evaluates who is signing in and under what conditions. Agent governance must also evaluate what is being executed, at the moment of execution.

Here is what that looks like: an agent is about to call a tool, read from a database, trigger an API, or execute a workflow. Before that happens, there is a decision point. Runtime enforcement evaluates the composite identity: the human owner, the agent itself, the tool credentials, and the defined purpose, all at execution time. Is this agent authenticated? Does it have permission for this specific action? Is this behavior consistent with its intended function?
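The decision point described above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any product's API: the `CompositeIdentity` class, the three policy tables, and the `evaluate` function are hypothetical names, and the sample data is invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompositeIdentity:
    """Hypothetical bundle of what gets evaluated at execution time."""
    human_owner: str
    agent_id: str
    tool_credential: str
    purpose: str


# Illustrative policy data; a real deployment would load this from its registry.
AGENT_PERMISSIONS = {"invoice-bot": {"db.read", "api.post_payment"}}
PURPOSE_ACTIONS = {"reconcile invoices": {"db.read"}}
CREDENTIAL_BINDINGS = {"svc-erp-token": "invoice-bot"}


def evaluate(identity: CompositeIdentity, action: str, authenticated: bool) -> tuple[bool, str]:
    """Run each check before the call executes; the first failure denies it."""
    if not authenticated:
        return False, "agent is not authenticated"
    if not identity.human_owner:
        return False, "no accountable human owner"
    if CREDENTIAL_BINDINGS.get(identity.tool_credential) != identity.agent_id:
        return False, "tool credential is not bound to this agent"
    if action not in AGENT_PERMISSIONS.get(identity.agent_id, set()):
        return False, "no permission for this action"
    if action not in PURPOSE_ACTIONS.get(identity.purpose, set()):
        return False, "action is inconsistent with declared purpose"
    return True, "allowed"


bot = CompositeIdentity("j.doe", "invoice-bot", "svc-erp-token", "reconcile invoices")
print(evaluate(bot, "db.read", authenticated=True))
# (True, 'allowed')
print(evaluate(bot, "api.post_payment", authenticated=True))
# (False, 'action is inconsistent with declared purpose')
```

The second call is the interesting one: the agent holds the permission, but the action does not match its declared purpose, so the call is denied before it executes.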

That is runtime enforcement. Not configuration-time policies that assume the agent will behave as designed. Decisions at execution time, every time.

What Silverfort does differently

If the failure pattern is identity immaturity, then the control point must also be identity. Most AI agent security approaches start at the model or application layer. We start at the identity layer. Because if identity is uncontrolled, everything above is fragile.

Human accountability by design

Every AI agent is explicitly tied to a real human owner in policy. Not informally. Not in documentation. In enforcement logic.

Every action can be traced back to a real chain of accountability: which human owns this agent, what identity the agent is operating under, and what credentials it uses to access resources. That is what we mean by composite identity. And it is what makes enforcement possible before monitoring even begins.

Runtime enforcement at the identity layer

Silverfort enforces at the identity decision point at runtime. For MCP-connected agents, that means sitting in line between the agent and the MCP server. For platform-native agents, enforcement is delivered through native integration, directly within the platform.

Before a tool call executes, we evaluate identity, context, delegation, and policy in real time. If the action exceeds scope, it does not execute. This is not configuration-time IAM. This is execution-time identity enforcement. That distinction matters.

Least privilege that survives autonomy

Static least privilege assumes predictable behavior. Agents break that assumption. They reason. They chain tools. They drift from what they were originally authorized to do. Least privilege must be validated at runtime, not just set at provisioning.

That means if an agent tries to access a resource outside its declared purpose, it gets blocked. If delegated privileges start expanding beyond what was originally scoped, they are contained. This is the same enforcement model we apply to humans and service accounts, now extended to AI agents.
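As a rough sketch of validating least privilege at runtime rather than only at provisioning, the example below checks every step of an agent's tool chain against its declared scope and stops at the first step that falls outside it. The `run_chain` function and the resource names are hypothetical.

```python
from typing import Optional


def run_chain(steps: list[str], declared_scope: set[str]) -> tuple[list[str], Optional[str]]:
    """Check every step at execution time; stop at the first out-of-scope resource."""
    executed: list[str] = []
    for resource in steps:
        if resource not in declared_scope:
            return executed, resource  # blocked before it runs
        executed.append(resource)
    return executed, None


# The agent was scoped for CRM reads and outbound email only.
scope = {"crm:read", "email:send"}

# While reasoning, it drifts and decides it also wants HR data.
plan = ["crm:read", "hr:read", "email:send"]

executed, blocked = run_chain(plan, scope)
print(executed, blocked)  # ['crm:read'] hr:read
```

Stopping the whole chain at the first violation is one design choice among several; an alternative would be to skip the blocked step and continue, at the cost of letting a drifting task run on.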

One Identity Security Platform

AI Agent Security is not a standalone product. Agents sit at the intersection of human identities, non-human identities, service accounts, cloud resources, SaaS applications, and protocol layers like MCP. If those domains are secured separately, agents will exploit the seams.

Silverfort unifies this. One policy framework. One observability layer. One enforcement architecture. Across humans, machines, and AI. That is the architectural difference.

Enabling AI innovation without slowing it down

Security leaders are not trying to stop AI adoption. They are trying to make sure it does not outrun their ability to govern it. The organizations moving fastest with AI agents are the ones that figured out early: the right security model is a speed advantage, not a drag.

Cars have brakes so you can drive fast. The same principle applies here.

But the brakes only work if they’re connected to the same system. Today, most organizations secure human identities in one tool, service accounts in another, and AI agents (if at all) in a third. If those domains are secured separately, agents will exploit the seams.

That’s the reason teams need a unified Identity Security Platform.

- One policy framework means a CISO can define “no agent accesses production data without human approval” once and have it applied across every agent, every platform, every protocol. No per-tool configuration. No coverage gaps.

- One observability layer means when an agent acts, you see the full chain: which human triggered it, which NHI it authenticated with, which tool it called, and what data it touched. Not three dashboards stitched together after the fact, but a single view that makes incident response possible in minutes instead of days.

- One enforcement point means policy is applied at runtime, at the moment of action, not retroactively through quarterly access reviews. When an agent requests access, the decision happens inline. Allow, deny, or step up. Before the action executes, not after.
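The three points above can be sketched together: one rule defined once, an inline allow, deny, or step-up decision, and a single record of the full chain. Everything named here (`Decision`, `decide`, `audit_record`, and the sample values) is hypothetical and meant only to illustrate the shape of the idea.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    STEP_UP = "step_up"  # pause and require human approval


def decide(agent_registered: bool, touches_production: bool, human_approved: bool) -> Decision:
    """One illustrative rule: no agent accesses production data without human approval."""
    if not agent_registered:
        return Decision.DENY
    if touches_production and not human_approved:
        return Decision.STEP_UP
    return Decision.ALLOW


def audit_record(human: str, nhi: str, tool: str, data: str, decision: Decision) -> dict[str, str]:
    """Capture the full chain in one place: human, NHI, tool, data, outcome."""
    return {"human": human, "nhi": nhi, "tool": tool, "data": data, "decision": decision.value}


outcome = decide(agent_registered=True, touches_production=True, human_approved=False)
record = audit_record("j.doe", "svc-erp-token", "db.read", "prod.invoices", outcome)
print(outcome.value)  # step_up
print(record["human"], record["decision"])  # j.doe step_up
```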

This is what shifts AI agent security from a governance exercise to an operational capability. Discovery tells you what exists. Registration tells you who owns it. Runtime enforcement tells agents what they’re actually allowed to do, in the moment, every time.

AI agents represent the next frontier of identity. Identity Security must evolve accordingly, from governance alone to continuous, runtime enforcement. Discover what is running. Register who owns it. Enforce at the moment of execution. That is the path.

The Gartner report is worth reading in full: https://www.silverfort.com/landing-page/campaign/gartner-report-iam-for-agents/

Want to learn how Silverfort discovers and protects AI agent identities? See AI Agent Security in action.


This post originally appeared on Silverfort.com, and is re-published with permission.